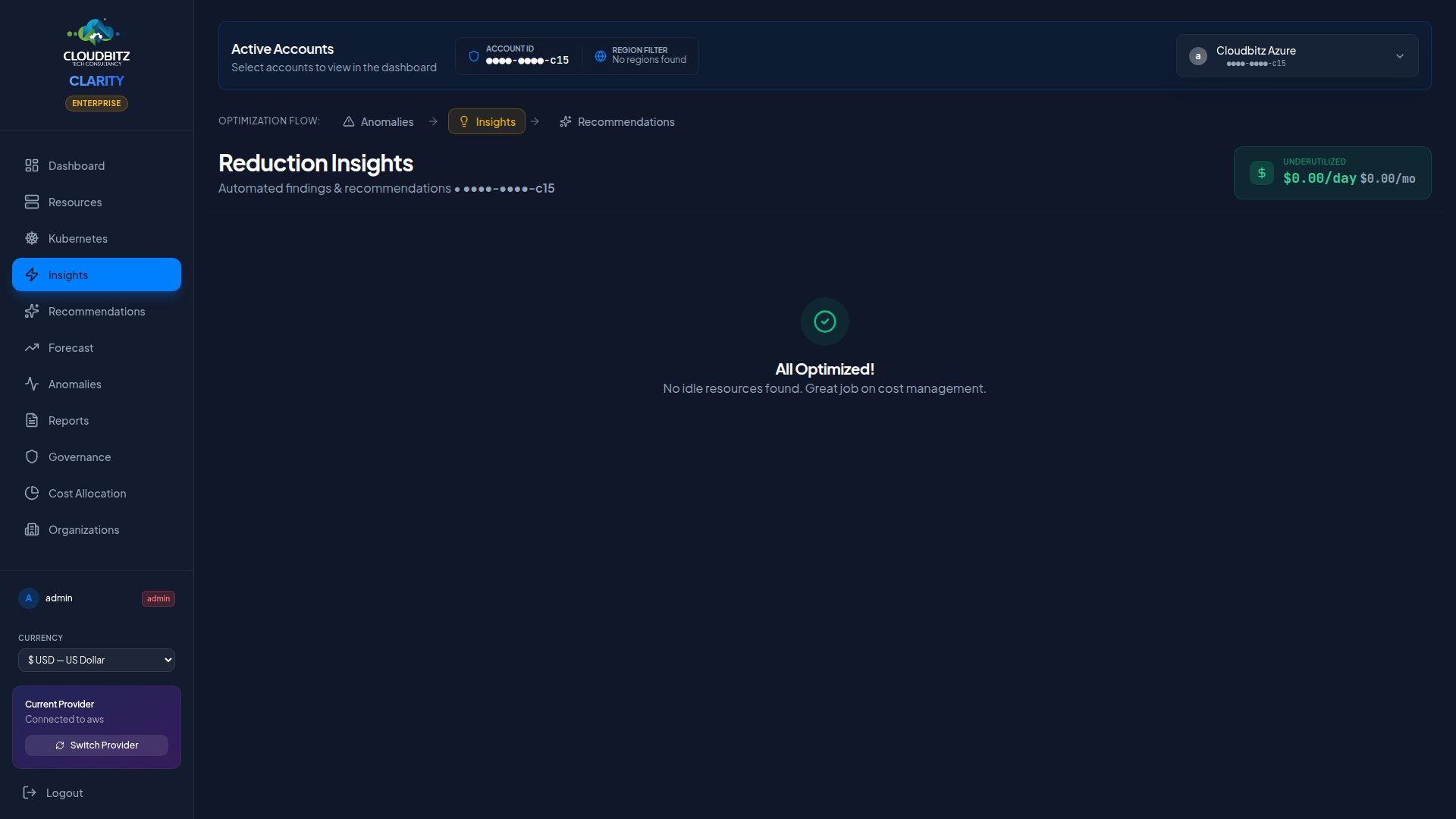

Insights & recommendations

The Insights page is where CLARITY surfaces optimization opportunities across your cloud infrastructure. It combines automated analysis with AI validation to help you reduce waste and right-size your resources.

Single unified feed

Findings from every rule in the engine — idle resources, underutilized capacity, oversized instances, missing tags, commitment opportunities, lifecycle gaps, and more — appear in one feed grouped by category. No more separate "System Findings" and "Underutilized Resources" sections to reconcile.

Each card carries:

- Severity badge — critical/high/medium/low

- Status pill — what kind of optimization this is (see below)

- Service / region / instance type — context badges

- Age — days since the finding was first detected (escalates colour after 7d / 30d to surface stale unresolved items)

- Cost-center pill — which business owner the finding rolls up to (when business mapping is configured)

- AI validation pill — Agree/Disagree/Partial (when AI analysis is enabled)

- Estimated monthly savings — if applicable
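
The age escalation described in the list above can be sketched as a simple tier lookup. This is a minimal illustration, not CLARITY's implementation: the tier names (`fresh`, `aging`, `stale`) are hypothetical, and treating "after 7d / 30d" as strictly greater than 7 and 30 days is an assumption.

```python
def age_tier(age_days: int) -> str:
    """Map a finding's age in days to a display tier.

    Tier names are hypothetical placeholders for whatever colours the UI uses.
    """
    if age_days > 30:   # assumed: escalates again once past 30 days
        return "stale"
    if age_days > 7:    # assumed: first escalation once past 7 days
        return "aging"
    return "fresh"
```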

Status pills

The status pill names the kind of optimization at a glance, regardless of which provider rule produced the finding:

| Status | Meaning |
|---|---|
| Idle | Provisioned but unused — typically <5% utilization or zero traffic |
| Underutilized | In use but well below provisioned capacity — typically 5-30% utilization |
| Oversized | Capacity exceeds workload requirements — right-size to a smaller tier |
| Untagged | Resource lacks tags needed for chargeback and ownership attribution |
| RI/SP/CUD opportunity | Sustained usage suggests a Reserved Instance, Savings Plan, or Committed Use Discount would lower the bill |
| Lifecycle policy | Storage object retention/transition rule missing — old data accumulating at hot-tier price |
| Security hardening | Security control missing (WAF, public access, etc.) that may also drive cost |
| Data transfer | Inter-region or egress traffic is a meaningful share of the bill |
| Network | Networking resource (NAT, VPC endpoint, IP) configuration suggests savings |
| Anomaly | Recurring cost-pattern deviation from baseline |
| API cost | Provider-side metered API usage (CE pagination, BigQuery scans) |
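
To make the "typical" utilization bands for Idle and Underutilized concrete, here is a rough sketch. The function name and the exact boundary handling are assumptions, and the real rules likely weigh other signals (such as zero traffic) that this does not model.

```python
from typing import Optional


def utilization_status(utilization_pct: float) -> Optional[str]:
    """Classify a resource by average utilization using the typical bands above.

    Returns None when utilization alone does not suggest a status.
    """
    if utilization_pct < 5:
        return "Idle"            # typically <5% utilization
    if utilization_pct <= 30:
        return "Underutilized"   # typically 5-30% utilization
    return None
```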

Filter chips

A row of multi-select filter chips above the feed lets you narrow what you see without losing the totals:

- Status — pick one or more statuses (Idle, Untagged, etc.)

- Severity — critical/high/medium/low

- Category — Compute / Database / Storage / Networking / Containers / Machine Learning / Analytics / Web / Governance / Commitments / Monitoring / Other

Each chip shows the unfiltered count (e.g. Idle (6), Critical (5)) so you can see what each filter would surface before clicking. Chips that have zero findings on the current account are hidden — no clutter for filters with nothing to do. Filters AND across dimensions, so picking Status: Idle + Severity: Critical + Category: Database lands you on idle critical database findings only. A Clear filters link appears whenever any chip is active.
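
The filter semantics can be sketched as follows. This is an illustration under stated assumptions rather than the product's code: the `Finding` fields and function names are hypothetical, and treating multiple selections within one dimension as OR is an assumption (the text only states that filters AND across dimensions).

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    # Hypothetical minimal shape of a finding; real cards carry more fields.
    status: str
    severity: str
    category: str


def apply_filters(findings, selected):
    """Return findings matching every active dimension.

    `selected` maps a dimension name to the set of chosen values.
    An empty set means that dimension has no active chip.
    """
    def matches(finding):
        return all(
            not values or getattr(finding, dimension) in values
            for dimension, values in selected.items()
        )
    return [f for f in findings if matches(f)]


def chip_counts(findings, dimension):
    """Count findings per chip value from the unfiltered list.

    Values with no findings never appear in the result, which mirrors
    hiding chips that have zero findings.
    """
    return dict(Counter(getattr(f, dimension) for f in findings))
```

Calling `apply_filters` with `{"status": {"Idle"}, "severity": {"critical"}, "category": {"Database"}}` keeps only idle, critical, database findings, while `chip_counts` is always computed on the full list so the counts do not shrink as chips are toggled.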

Minimum cost threshold

Only resources costing more than $10/month are flagged. Low-cost resources are excluded to keep the signal-to-noise ratio high.
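
As a sketch of this gate, assuming each resource is a dict with a hypothetical `monthly_cost_usd` key and that "more than $10" means strictly greater:

```python
MIN_MONTHLY_COST_USD = 10.0  # threshold stated above


def above_cost_threshold(resources):
    """Keep only resources whose monthly cost strictly exceeds the threshold."""
    return [r for r in resources if r["monthly_cost_usd"] > MIN_MONTHLY_COST_USD]
```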

Tag coverage gate

A tag-coverage warning sits at the top of the page when your account is below the 80% coverage threshold. Untagged resources distort every savings number on the page — chargeback rolls them into "Shared Infrastructure" instead of their real owner, and right-sizing recommendations can't be attributed to a team.

The warning shows the gap (e.g. 73.0% on demo-aws-587432109876 — chargeback accuracy will be reduced), how much spend it represents (12% of spend untagged), and links to the Tag Health tab for remediation. Dismissing the warning collapses it to a small "tag warning hidden — click to show" pill at the top of the page; click the pill to bring back the full panel.
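
The two numbers in the warning could be derived roughly as below. How CLARITY actually measures coverage is not stated here; this sketch assumes coverage is the share of resources carrying at least one tag and that the spend figure is the share of monthly cost on untagged resources. The dict keys and function name are hypothetical.

```python
COVERAGE_THRESHOLD_PCT = 80.0  # threshold stated above


def tag_coverage_summary(resources):
    """Compute tag coverage, untagged spend share, and whether to warn.

    Each resource is assumed to be a dict with `tags` (dict) and
    `monthly_cost_usd` (float).
    """
    total = len(resources)
    total_spend = sum(r["monthly_cost_usd"] for r in resources)
    tagged_count = sum(1 for r in resources if r["tags"])
    untagged_spend = sum(r["monthly_cost_usd"] for r in resources if not r["tags"])

    coverage_pct = 100.0 * tagged_count / total if total else 100.0
    untagged_spend_pct = 100.0 * untagged_spend / total_spend if total_spend else 0.0
    return {
        "coverage_pct": round(coverage_pct, 1),
        "untagged_spend_pct": round(untagged_spend_pct, 1),
        "show_warning": coverage_pct < COVERAGE_THRESHOLD_PCT,
    }
```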

Provider-specific insights

Each cloud provider has unique optimization opportunities:

AWS

- NAT Gateway optimization — Identify expensive NAT traffic that could use VPC endpoints

- ECR lifecycle policies — Flag repositories without image cleanup rules

- EBS volume optimization — Detect unattached or underutilized volumes

- ECS/Fargate right-sizing — Analyze container CPU and memory reservations

Azure

- Stopped VM billing — Deallocated VMs that still incur disk charges

- Unattached managed disks — Orphaned disks with no associated VM

- App Service plan consolidation — Underutilized plans that could be merged

GCP

- HA in development — High-availability configurations on non-production workloads

- Persistent disk snapshots — Old snapshots consuming storage unnecessarily

- Idle Cloud SQL instances — Databases with minimal query activity

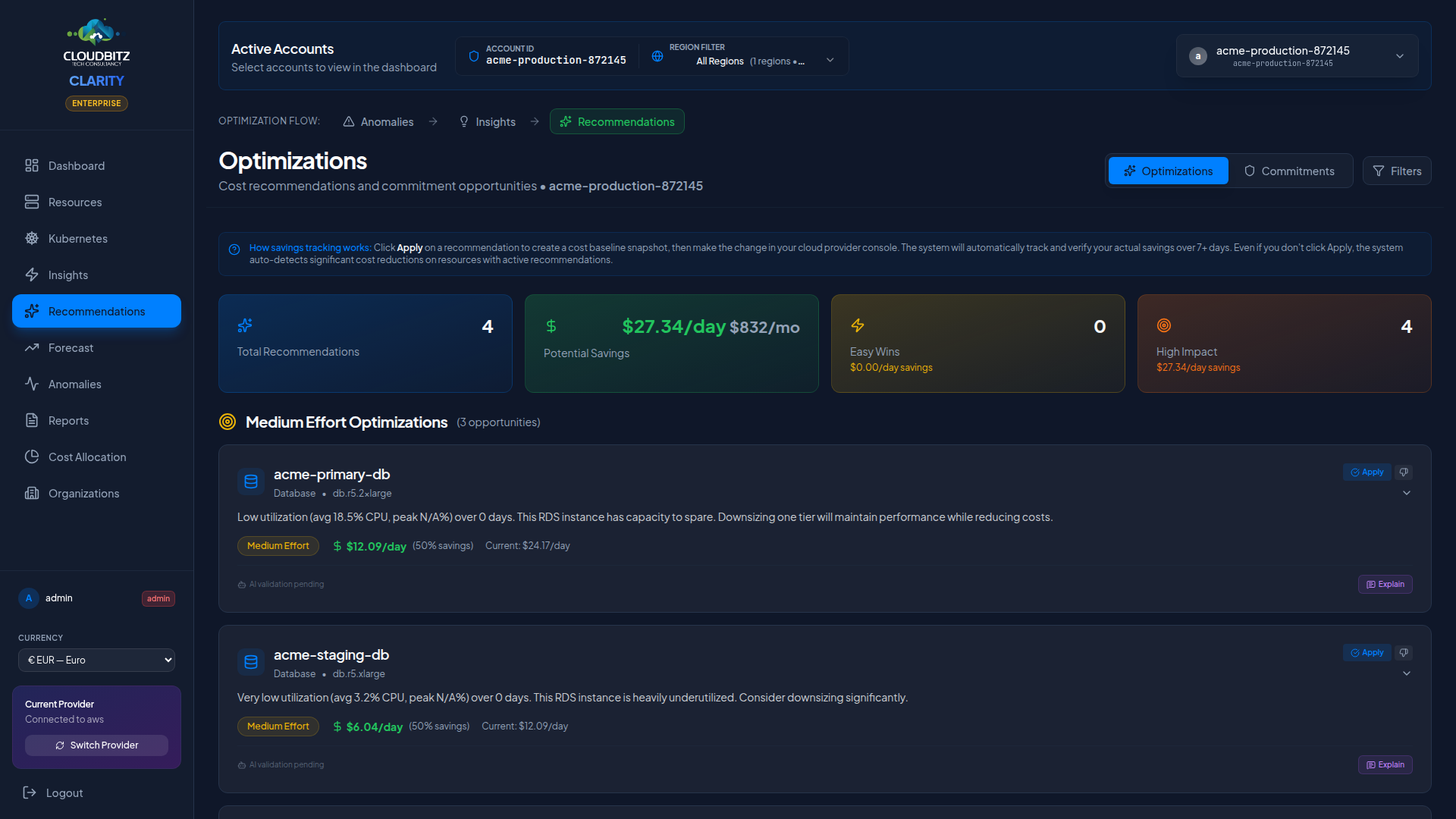

Recommendation types

Right-sizing

Suggests a smaller (or larger) instance type based on observed CPU, memory, and network utilization. Each recommendation shows:

- Current instance type and cost

- Recommended instance type and cost

- Estimated monthly savings

- Utilization metrics that support the recommendation

Commitment purchase

Recommends Reserved Instances, Savings Plans, or Committed Use Discounts based on your steady-state usage. See the Commitments page for details.

Resource cleanup

Identifies resources that can be safely deleted:

- Unattached storage volumes

- Old snapshots beyond retention policy

- Unused Elastic IPs or static addresses

- Idle load balancers with no targets

Effort classification

Each recommendation includes an effort estimate:

| Effort | Description | Examples |
|---|---|---|
| Low | Can be done in minutes, minimal risk | Delete unattached disk, remove old snapshot |
| Medium | Requires testing or a maintenance window | Right-size a VM, modify an instance type |
| High | Involves architecture changes or migration | Move to Spot instances, consolidate services |

Savings estimation

Every recommendation includes an estimated monthly savings figure. Savings are calculated using:

- Current resource cost (from billing data or pricing catalog)

- Recommended resource cost (from the provider's pricing)

- The difference, projected to a monthly figure

WARNING

Savings estimates are projections based on current usage patterns. Actual savings may vary if workload characteristics change after implementation.
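
The arithmetic behind a savings figure can be sketched as below. The 730-hour month and the hourly inputs are assumptions made for illustration; the actual projection method and price sources may differ.

```python
HOURS_PER_MONTH = 730  # assumed average hours in a month


def estimated_monthly_savings(current_hourly_usd: float,
                              recommended_hourly_usd: float) -> float:
    """Project the hourly price difference to a monthly figure."""
    hourly_difference = current_hourly_usd - recommended_hourly_usd
    return round(hourly_difference * HOURS_PER_MONTH, 2)
```

With hypothetical prices of $0.192/hour for the current resource and $0.096/hour for the recommended one, the difference of $0.096/hour projects to about $70.08 per month.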

AI validation

When AI analysis is enabled, each insight is validated by an AI model that reviews the underlying data and context. Validation results appear as badges:

| Badge | Meaning |
|---|---|
| Agree | AI confirms the recommendation is sound |
| Disagree | AI found reasons the recommendation may not apply |
| Partial | AI agrees with the finding but suggests a different action |

Explain button

Click the Explain button on any insight to get an AI-generated explanation that covers:

- Why this resource was flagged

- What the usage data shows

- Whether the recommendation is safe to implement

- Any risks or caveats to consider

TIP

AI explanations are cached for 24 hours. Subsequent clicks return the cached explanation instantly.
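
A 24-hour cache of this kind can be sketched as a small time-to-live wrapper. This is not CLARITY's implementation: the class name, the in-memory dict, and the `generate` callback (standing in for the AI call) are all hypothetical.

```python
import time

CACHE_TTL_SECONDS = 24 * 60 * 60  # 24 hours, as stated above


class ExplanationCache:
    """In-memory TTL cache keyed by insight ID (illustrative only)."""

    def __init__(self, generate, clock=time.monotonic):
        self._generate = generate   # callable: insight_id -> explanation text
        self._clock = clock         # injectable so expiry can be tested
        self._entries = {}          # insight_id -> (stored_at, text)

    def explain(self, insight_id):
        now = self._clock()
        entry = self._entries.get(insight_id)
        if entry is not None and now - entry[0] < CACHE_TTL_SECONDS:
            return entry[1]         # still fresh: return the cached text
        text = self._generate(insight_id)
        self._entries[insight_id] = (now, text)
        return text
```

The first call for an insight invokes `generate`; later calls within 24 hours return the stored text without regenerating it.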